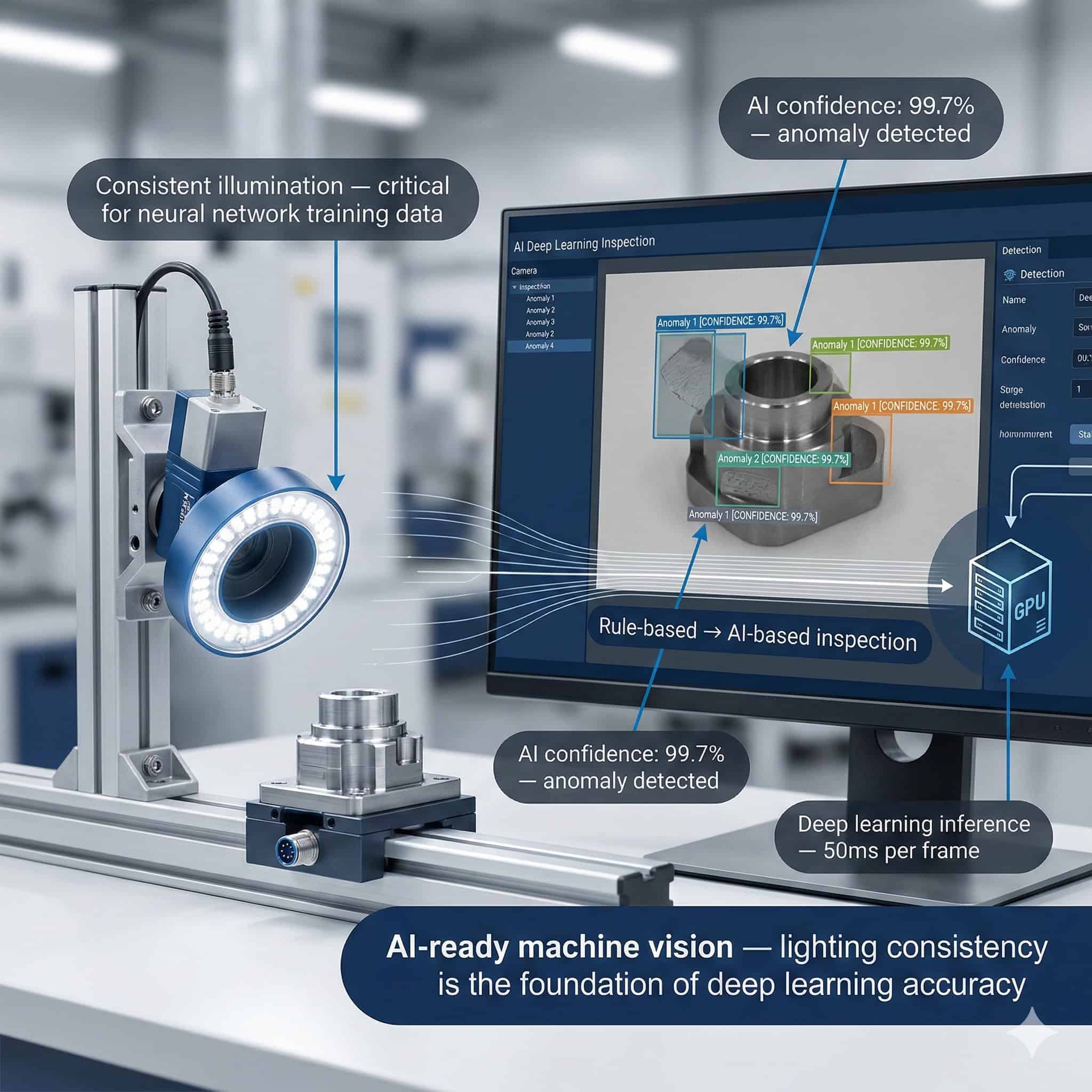

The rise of deep learning-based machine vision is one of the most significant shifts in industrial inspection of the past decade. Neural networks trained on thousands of images can now detect surface defects, verify assembly presence, read damaged barcodes, and classify materials with accuracy that often surpasses traditional rule-based algorithms. Yet despite this leap in software intelligence, the fundamental physics of light and optics remain unchanged. How an industrial camera captures an image still depends entirely on the quality, consistency, and geometry of the illumination in front of it.

Understanding the relationship between AI-powered vision and illumination design is essential for any engineer or integrator building modern inspection systems. Deep learning does not eliminate the need for good lighting; in many cases, it amplifies that need.

Traditional Rule-Based Vision vs. Deep Learning Inspection

Classical machine vision systems operate by defining explicit rules: if a pixel value falls below a threshold, flag a defect; if an edge deviates from a reference geometry, trigger a rejection. These systems are fast, deterministic, and highly effective for well-constrained problems. However, they are brittle: a change in lighting angle, intensity, or colour temperature can invalidate the tuned thresholds and force engineers to recalibrate the inspection logic.
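The brittleness described above can be illustrated with a minimal sketch. The function names, the threshold of 100, and the synthetic grey levels are assumptions chosen for illustration, not values from any real inspection system.

```python
import numpy as np

def count_dark_pixels(image: np.ndarray, threshold: int = 100) -> int:
    """Count pixels darker than a fixed grey-level threshold."""
    return int(np.count_nonzero(image < threshold))

def is_defective(image: np.ndarray, threshold: int = 100,
                 max_dark_pixels: int = 0) -> bool:
    """Hypothetical rule: reject the part if any pixel is darker than the threshold."""
    return count_dark_pixels(image, threshold) > max_dark_pixels

# Synthetic defect-free part: uniform surface at grey level 120 (8-bit).
good_part = np.full((64, 64), 120, dtype=np.uint8)

# The same part imaged with the light 20% dimmer (grey level drops below 100).
dimmed_part = (good_part.astype(np.float32) * 0.8).astype(np.uint8)

nominal_result = is_defective(good_part)    # rule passes the part
dimmed_result = is_defective(dimmed_part)   # same part, now rejected
```

The part has not changed between the two calls; only the illumination has, yet the fixed threshold now rejects it.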

Deep learning approaches operate differently. A convolutional neural network learns to associate image features with inspection outcomes through exposure to large labelled datasets. It can generalise across minor variations and recognise complex patterns that would be impossible to encode manually. This makes deep learning particularly powerful for texture-based defect detection, anomaly recognition in complex assemblies, and appearance verification tasks where the acceptable range is wide and hard to define analytically.


Why Lighting Consistency Matters Even More for AI Systems

A common misconception is that deep learning models are inherently robust to lighting variation. Whatever tolerance they show in general-purpose recognition does not carry over to the factory floor, where the model is not asked to recognise a general category: it is asked to discriminate between a conforming product and a non-conforming one that may differ by fractions of a millimetre, a surface micro-crack, or a subtle colour shift. These differences are only visible if the illumination renders them with sufficient contrast and repeatability. A model trained under one lighting condition and deployed under a different one will encounter a shifted input distribution that may cause it to miss defects or generate false positives at an unacceptable rate.
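One way to make a shifted input distribution visible is to compare grey-level histograms of production images against the training set. The sketch below uses the total variation distance between normalised histograms; the function names, the bin count, and the synthetic image statistics are assumptions for illustration only.

```python
import numpy as np

def grey_histogram(images: np.ndarray, bins: int = 32) -> np.ndarray:
    """Normalised grey-level histogram over all pixels of an image batch."""
    counts, _ = np.histogram(images, bins=bins, range=(0, 256))
    return counts / counts.sum()

def histogram_distance(reference: np.ndarray, batch: np.ndarray,
                       bins: int = 32) -> float:
    """Total variation distance: 0 for identical histograms, 1 for disjoint ones."""
    p = grey_histogram(reference, bins)
    q = grey_histogram(batch, bins)
    return float(0.5 * np.abs(p - q).sum())

# Synthetic stand-ins for real image batches (20 images of 32x32 pixels each).
rng = np.random.default_rng(0)
training = rng.normal(128, 10, size=(20, 32, 32)).clip(0, 255)
same_lighting = rng.normal(128, 10, size=(20, 32, 32)).clip(0, 255)
dimmer_lighting = rng.normal(100, 10, size=(20, 32, 32)).clip(0, 255)

d_same = histogram_distance(training, same_lighting)       # close to 0
d_shifted = histogram_distance(training, dimmer_lighting)  # much larger
```

A check of this kind says nothing about model accuracy directly; it only flags that the images reaching the model no longer resemble the ones it was trained on.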

This is why lighting engineers working alongside AI developers must treat the illuminator as a first-class component of the system architecture. The LED illuminator must deliver the same spectral output, the same spatial uniformity, and the same intensity at every acquisition cycle, whether the system is running its first image or its ten-millionth.

Thermal Stability and Long-Term Photometric Consistency

LED illuminators naturally experience a shift in luminous flux as junction temperature rises. For a traditional rule-based system with generous threshold margins, a 5% drop in brightness might go unnoticed. For a deep learning model trained at a specific brightness level, the same drop is a covariate shift that can silently degrade performance over weeks or months without triggering any obvious alarm. Industrial-grade illuminators designed with active thermal management maintain stable output over the product lifetime, preserving the photometric conditions under which the model was trained.
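Because this kind of drift is silent, it helps to measure it explicitly. A minimal sketch follows, assuming the cell contains a fixed reference target whose mean grey level was recorded when the training images were acquired; the target, the function names, and the 3% tolerance are hypothetical.

```python
from statistics import fmean

def relative_drift(baseline: float, recent_readings: list[float]) -> float:
    """Fractional change of the recent mean grey level relative to the baseline."""
    return (fmean(recent_readings) - baseline) / baseline

def drift_alarm(baseline: float, recent_readings: list[float],
                tolerance: float = 0.03) -> bool:
    """True when the absolute drift exceeds the allowed tolerance."""
    return abs(relative_drift(baseline, recent_readings)) > tolerance

# Mean grey level of the reference target at training time (illustrative value).
baseline_level = 200.0

# Readings after the illuminator has warmed up: the mean is 190, a 5% drop.
warm_readings = [191.0, 190.0, 189.5, 189.5]

drift = relative_drift(baseline_level, warm_readings)
alarm = drift_alarm(baseline_level, warm_readings)
```

With these numbers the drift is -5%, which exceeds the assumed 3% tolerance and raises the alarm well before the change would be obvious to an operator.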

Building AI-Ready Training Datasets: The Illumination Strategy

A deep learning model is only as good as the data used to train it. In industrial vision, this means acquiring images that faithfully represent the full range of product appearance and defect types that the system will encounter in production. The illumination configuration used during data collection directly determines what features are visible in the training images and, consequently, what the model learns to detect.

Wavelength Selection and Feature Visibility

Different LED wavelengths emphasise different surface characteristics. Blue and UV light can reveal surface contamination and micro-cracks on metals that are hard to see or invisible under white illumination. Red light penetrates translucent materials and reduces the visibility of surface blemishes that could otherwise confuse a classifier. Near-infrared illumination suppresses colour variation and enhances structural features beneath painted or coated surfaces. The choice of illumination wavelength when building a training dataset is a data engineering decision that directly shapes what the model can and cannot learn to detect.
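Treating wavelength choice as a data engineering decision suggests measuring it rather than guessing. The sketch below ranks candidate wavelengths by a simple contrast-to-noise ratio between defect and background pixels. The metric is one reasonable choice among several, and the wavelength labels and pixel values are invented for illustration, not measurements.

```python
import numpy as np

def contrast_to_noise(defect_pixels, background_pixels) -> float:
    """Absolute mean difference divided by the combined standard deviation."""
    d = np.asarray(defect_pixels, dtype=float)
    b = np.asarray(background_pixels, dtype=float)
    noise = np.sqrt(d.var() + b.var()) + 1e-9  # epsilon avoids division by zero
    return float(abs(d.mean() - b.mean()) / noise)

def best_wavelength(samples: dict) -> str:
    """samples maps a wavelength label to (defect_pixels, background_pixels)."""
    return max(samples, key=lambda name: contrast_to_noise(*samples[name]))

# Hypothetical grey levels sampled from test images of the same defect.
samples = {
    "white":      ([118, 122, 120, 124], [128, 132, 130, 126]),
    "blue_470nm": ([60, 64, 62, 58],     [128, 132, 130, 126]),
    "red_630nm":  ([200, 140, 90, 170],  [210, 150, 100, 180]),
}

choice = best_wavelength(samples)
```

In this made-up data the red images are the brightest on average but separate defect from background worst, which is why the ranking uses contrast rather than brightness.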

Multi-Illumination Datasets and Data Augmentation

Advanced practitioners build training datasets that intentionally capture the same part under multiple illumination setups — brightfield, darkfield, coaxial, and diffuse — generating multi-channel image stacks that give the neural network richer information per inspection cycle. While this increases the complexity of the acquisition hardware, it can dramatically reduce the number of labelled samples required for the model to converge, and improves generalisation to novel defect appearances.
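A multi-channel stack of this kind can be assembled as sketched below, assuming each lighting setup yields one single-channel 8-bit image of the same size and that the part does not move between captures. The channel order and function name are assumptions; what matters is that the order stays fixed between training and deployment.

```python
import numpy as np

# Fixed channel order; it must be identical at training and inference time.
LIGHTING_ORDER = ("brightfield", "darkfield", "coaxial", "diffuse")

def build_stack(captures: dict) -> np.ndarray:
    """Stack single-channel captures into an (H, W, C) float32 array in [0, 1]."""
    missing = [name for name in LIGHTING_ORDER if name not in captures]
    if missing:
        raise KeyError(f"missing captures: {missing}")
    shapes = {captures[name].shape for name in LIGHTING_ORDER}
    if len(shapes) != 1:
        raise ValueError(f"captures differ in shape: {shapes}")
    stack = np.stack([captures[name] for name in LIGHTING_ORDER], axis=-1)
    return stack.astype(np.float32) / 255.0

# Synthetic captures: constant images with grey levels 0, 50, 100, 150.
captures = {
    name: np.full((4, 5), 50 * i, dtype=np.uint8)
    for i, name in enumerate(LIGHTING_ORDER)
}
stack = build_stack(captures)  # shape (4, 5, 4)
```

The resulting array can be fed to a network whose first layer accepts four input channels instead of the usual one or three.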

Software-side data augmentation — simulating brightness shifts, contrast changes, and noise — is a standard technique for expanding dataset size, but it cannot substitute for genuine photometric diversity captured in the real acquisition environment. Augmentation works best as a complement to a robust physical illumination strategy.
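For reference, the software-side augmentation mentioned above might look like the following sketch, which applies a random gain, a random contrast change about the image mean, and additive Gaussian noise. The parameter ranges are illustrative assumptions and would normally be tuned to the variation actually observed on the line.

```python
import numpy as np

def augment(image: np.ndarray, rng: np.random.Generator,
            max_gain: float = 0.1, max_contrast: float = 0.1,
            noise_sigma: float = 2.0) -> np.ndarray:
    """Return a randomly perturbed 8-bit copy of a greyscale image."""
    img = image.astype(np.float32)
    gain = 1.0 + rng.uniform(-max_gain, max_gain)              # brightness shift
    contrast = 1.0 + rng.uniform(-max_contrast, max_contrast)  # contrast change
    mean = img.mean()
    img = (img - mean) * contrast + mean   # stretch or compress about the mean
    img = img * gain                       # scale overall brightness
    img = img + rng.normal(0.0, noise_sigma, size=img.shape)   # sensor-like noise
    return np.clip(np.rint(img), 0, 255).astype(np.uint8)

rng = np.random.default_rng(42)
image = np.full((16, 16), 128, dtype=np.uint8)
augmented = augment(image, rng)
```

Note what this cannot do: a global gain or noise term never changes the direction of the light, so it cannot create the shadows or highlights that a different illumination geometry would produce.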

Structured Light and 3D Deep Learning

A growing segment of deep learning inspection deployments operates on three-dimensional point clouds or depth maps rather than 2D images. Structured light projectors and laser triangulation systems generate these 3D representations by projecting controlled patterns of light onto the inspection target and computing surface geometry from the observed deformations. Deep learning models trained on 3D data can detect warpage, dimensional deviation, and surface topology defects that are invisible in standard 2D images. The illumination requirements for 3D systems are even more stringent: ambient light must be rigorously controlled to prevent interference with the projected pattern, and the projector must maintain stable power output across the full operational temperature range.

Practical Implications for Machine Vision System Design

Engineers designing AI-powered inspection cells should approach illumination selection with the same rigour applied to camera and optics selection. Key considerations include:

- Long-term photometric stability: select illuminators with active thermal management and guaranteed lumen maintenance over the expected service life.

- Spectral precision: choose the LED wavelength that maximises the contrast of the features the model must detect, not simply the wavelength that produces the brightest image.

- Geometric repeatability: ensure the illuminator is mechanically fixed relative to the inspection target and camera, so that the illumination angle does not drift between maintenance cycles.

- Strobe synchronisation: use pulsed illumination with hardware trigger synchronisation to freeze motion and ensure every image is acquired under identical exposure conditions.

- Ambient light rejection: high-intensity LED illuminators with narrow-angle optics can overpower ambient contributions, often without requiring a fully enclosed inspection cell.
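The strobe synchronisation point in the list above comes down to simple arithmetic: the blur in pixels is the distance the part travels during the light pulse divided by the size of one pixel on the part. A minimal sketch follows; the conveyor speed, pixel footprint, and one-pixel blur budget are assumed example values.

```python
def motion_blur_px(speed_mm_s: float, pulse_us: float, mm_per_px: float) -> float:
    """Blur in pixels caused by part motion during one strobe pulse."""
    return speed_mm_s * (pulse_us * 1e-6) / mm_per_px

def max_pulse_us(speed_mm_s: float, mm_per_px: float,
                 blur_budget_px: float = 1.0) -> float:
    """Longest pulse, in microseconds, that keeps blur within the budget."""
    return blur_budget_px * mm_per_px / speed_mm_s * 1e6

# Example: parts moving at 500 mm/s, each pixel covering 0.1 mm of the part.
blur = motion_blur_px(speed_mm_s=500.0, pulse_us=100.0, mm_per_px=0.1)
limit = max_pulse_us(speed_mm_s=500.0, mm_per_px=0.1)
```

Under these assumptions a 100 µs pulse produces half a pixel of blur, and the pulse could be as long as 200 µs before blur reaches one pixel. Shorter pulses need proportionally higher peak intensity to reach the same exposure.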

The RODER Vision Perspective: AI-Ready Illumination

At RODER Vision, every illuminator in the product range is engineered with the stability and repeatability that AI-powered inspection systems demand. The HTTM (High Thermal Transfer Module) technology embedded in RODER illuminators actively dissipates heat away from the LED junction, maintaining consistent photometric output across the full operating temperature range. This is not simply a component reliability feature — it is a prerequisite for building machine learning systems that remain accurate over multi-year production runs.

The availability of multiple LED wavelengths across every product family — from UV through visible to near-infrared — allows vision engineers to select the precise spectral configuration that maximises the discriminative power of the training dataset. Modular form factors and custom-length configurations ensure that the illuminator geometry can be adapted to any inspection cell layout without compromising photometric performance.

As deep learning continues to expand its footprint in industrial inspection, the role of illumination engineering becomes more strategic, not less. The most capable neural network cannot compensate for inconsistent, unstable, or poorly designed lighting. The quality of the light source determines the quality of the data, and the quality of the data determines the quality of the model. AI-ready illumination is not optional; it is the foundation on which reliable deep learning inspection is built.

Products and Technologies

RODER Vision Illuminator Families for AI-Based Inspection Systems

Selecting the right illuminator family is a critical step when designing an AI-powered inspection cell. The following RODER Vision product families are particularly well suited for deep learning applications, offering the photometric stability, spectral flexibility, and integration options that modern AI systems require.

DL6 — High Density LED Matrix

High-density matrix for uniform brightfield illumination. Multi-wavelength (UV to NIR). Ideal for AI datasets on flat surfaces and PCBs.

BL3 — LED Backlight Illuminators

High-uniformity backlights for silhouette and profile inspection. Essential for edge detection and transparent part datasets.

DC6 — High Density LED Ring

Uniform on-axis ring illumination for circular parts. Suited to AI-based inspection of connectors, caps and pins with 360° coverage.

FD2 — Flat Dome LED Illuminators

Ultra-diffuse shadowless illumination. Eliminates reflections on glossy or curved surfaces. Ideal for AI classifiers on reflective parts.

Frequently Asked Questions

Deep learning does not remove the need for controlled lighting. Deep learning models learn from image data, and the quality of that data depends directly on illumination consistency and contrast. A model trained under one lighting condition will perform poorly if deployed under different lighting, making stable, well-designed illumination a prerequisite for reliable AI-based inspection.

The optimal wavelength is the one that maximises the visual contrast of the specific features the model must detect. UV and blue light reveal surface cracks on metals, red light penetrates translucent materials, and near-infrared suppresses surface colour variation. Wavelength selection is a data engineering decision.

As LED junction temperature rises, luminous flux decreases. This gradual shift can silently degrade model accuracy over time without triggering obvious alarms. Illuminators with active thermal management maintain stable output and preserve the photometric conditions under which the model was trained.

Capturing the same inspection target under multiple illumination setups generates richer training data per sample, reducing the number of labelled images needed for convergence and improving the model’s ability to detect novel defect appearances.

Strobe illumination should be hardware-synchronised with the camera trigger to ensure every training image is acquired under identical exposure conditions, eliminating motion blur variability and photometric variation between frames.


Please note: Some images on this website have been intentionally generated using Artificial Intelligence (AI). This is due to the fact that, for many applications and projects, it is not possible to disclose photographs of the actual installation or system due to confidentiality agreements, contractual clauses, and Non-Disclosure Agreements (NDAs).